OS Compliance: From Truth to Automated Fix

The Drift Nobody Tracks Until It Breaks Something

Most teams know what OS version each device should be running. That information lives in NetBox, in a spreadsheet, or in someone's head. What they don't know — not with any real confidence — is what version is actually running right now, across every device, on every platform. Checking means SSHing into each box, parsing output that looks different depending on whether it's Arista, Juniper, or Cisco, and then cross-referencing that against the inventory. Nobody does this weekly. Most teams do it after something goes wrong.

Why This Matters

A device one minor version behind its approved baseline is easy to dismiss. But that version difference can mean a missing security patch, an unfixed memory leak, or a behavior that changed in a dot release and breaks something downstream. Identifying the gap is not the hard part. The hard part is the work that follows: figuring out which devices are affected, opening a ticket for each one, coordinating with the team that runs the playbooks, and confirming it actually got done. That chain of handoffs is where things stall. The two workflows described here replace the chain with an audit, an approval step, and an automated fix.

How It Works

Two workflows handle the full cycle. The first one audits and signals. The second picks up from there and remediates. They run on independent schedules and hand off through ServiceNow — a Catalog Task created by Workflow 1 has to be explicitly approved before Workflow 2 will touch the device.

Workflow 1 — OS Version Compliance Audit

Workflow 1 runs every day at 08:00. It connects to all devices in Pool Alpha, compares what's running against what NetBox says should be running, and creates a ServiceNow Catalog Task for every device that's out of line. The email report goes out regardless of how many mismatches it finds.

- SSH to each device: Connects to the target pool via Netmiko and runs "show version". Output is parsed per-platform — Arista, Juniper, and Cisco each format it differently.

- Pull the expected version from NetBox: Reads "custom_fields.os_version" for each device. That field is the baseline. If it's not set, the device gets flagged separately.

- Compare and classify: A Python node does the diff. An exact match means the device is clean. Anything else goes on the non-compliant list.

- Open a ServiceNow Catalog Task per device: High priority, assigned to NetOps, with the actual version, the desired version, and the device hostname in the description. No vague titles.

- Generate the CSV and send it: A timestamped report goes out by email with the full audit result — every device, every version, every status. Useful even when everything passes.
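
The per-platform parsing in the first step could be sketched roughly as follows. This is a minimal illustration, not the workflow's actual code: the regex patterns are assumptions based on typical "show version" output, the platform keys reuse the eos/ios/junos names mentioned later for the playbook, and parse_os_version is a hypothetical helper name.

```python
import re
from typing import Optional

# Assumed patterns for typical "show version" output. Real output varies by
# model and release, so these are illustrative only.
VERSION_PATTERNS = {
    "eos": re.compile(r"Software image version:\s*(\S+)"),
    "ios": re.compile(r"Version\s+([0-9A-Za-z.()]+)"),
    "junos": re.compile(r"^Junos:\s*(\S+)", re.MULTILINE),
}


def parse_os_version(platform: str, show_version_output: str) -> Optional[str]:
    """Return the first version string found in the output, or None."""
    pattern = VERSION_PATTERNS.get(platform)
    if pattern is None:
        raise ValueError(f"unsupported platform: {platform}")
    match = pattern.search(show_version_output)
    return match.group(1) if match else None
```

Returning None on a failed match, rather than raising, would let the caller record the device as unparsed instead of aborting the whole audit.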

Workflow 2 — ServiceNow OS Upgrade & Notify

Workflow 2 runs every 4 hours (00:00, 04:00, 08:00, 12:00, 16:00, 20:00 server timezone). Each cycle queries ServiceNow and processes whichever Catalog Tasks are both Approved and Open at that moment. If no tasks match, the run completes with no action. The workflow doesn't touch a device unless its task has been explicitly approved.

- Query ServiceNow for approved OS Upgrade tasks: Pulls only Catalog Tasks that are Approved and in Open state, with "OS Upgrade" in the description. The device list comes from there; nothing is hardcoded.

- Build the Ansible inventory on the fly: A processing node extracts hostnames and IPs from the ticket data and constructs the inventory at runtime. No static hosts file to maintain.

- Run the playbook: Executes against each device using its stored credentials from the vault. The playbook path is "/playbooks/save_running_config.yaml".

- Close the tasks: On successful execution, each Catalog Task is set to Closed Complete. The audit trail in ITSM reflects what actually happened, not just what was planned.

- Send the results: Email with a per-device breakdown — which ones passed, which failed, and what the Ansible output was for each.
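
The filtering and runtime inventory steps above can be sketched as one small function. The task dictionaries and their keys (approval, state, hostname, ip, desired_os_version) are hypothetical stand-ins for whatever the ServiceNow query actually returns, and build_inventory is an illustrative name. The returned structure follows the shape of Ansible's YAML inventory format.

```python
def build_inventory(tasks):
    """Build an Ansible-style inventory from approved, open tasks only."""
    hosts = {}
    for task in tasks:
        # Hypothetical field names and values; real ServiceNow records differ.
        if task.get("approval") != "approved" or task.get("state") != "open":
            continue
        hosts[task["hostname"]] = {
            "ansible_host": task["ip"],
            "desired_os_version": task["desired_os_version"],
        }
    return {"all": {"hosts": hosts}}
```

Because the inventory is derived from the task list on every cycle, an empty result simply yields an inventory with no hosts, which matches the "no action" outcome described above.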

The Interactive Session with Flow Weaver

Neither workflow required writing a single line of YAML or defining service connections manually. The engineer described what they needed in plain language — target pool, NetBox field, ServiceNow priority, email — and Flow Weaver asked for whatever was missing before building the graph. Two conversations. Two production-ready workflows.

Workflow 1 interactive session

Workflow 2 interactive session

NetBox Defines the Baseline

Workflow 1 doesn't carry any hardcoded version expectations. It reads "custom_fields.os_version" from NetBox for each device in scope and treats that as the approved target. Whatever SSH returns gets compared against it. If the field isn't populated for a device, that gets flagged too, because a missing baseline is its own kind of drift.
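
A minimal sketch of that comparison, assuming the running version has already been parsed from the SSH output and the baseline has been read from NetBox. The classify function, the AuditResult type, and the "Non-Compliant" and "Missing Baseline" labels are hypothetical; only "In Sync" appears in the actual report.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class AuditResult:
    hostname: str
    running: Optional[str]
    desired: Optional[str]
    status: str


def classify(hostname: str, running: Optional[str], desired: Optional[str]) -> AuditResult:
    """Exact string match is compliant; an unset baseline gets its own status."""
    if not desired:
        status = "Missing Baseline"  # hypothetical label
    elif running == desired:
        status = "In Sync"
    else:
        status = "Non-Compliant"  # hypothetical label
    return AuditResult(hostname, running, desired, status)
```

Checking for the missing baseline first keeps a device with no approved version from being reported as an ordinary mismatch.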

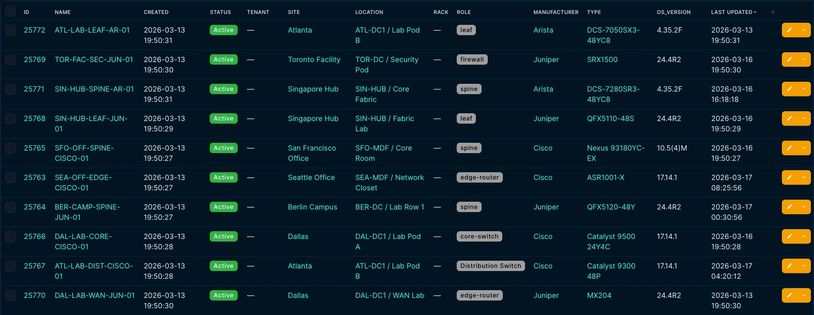

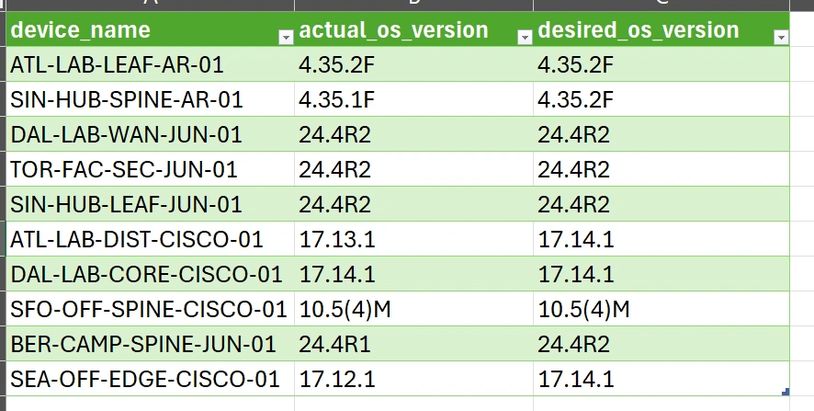

The screenshot shows the device list with both the current and desired version fields visible. That's the starting point for every run.

Setting Up the Pool and the Schedule

Before a workflow runs against anything, it needs to know two things: which devices to target, and when to run. Both are configured inside Flow Weaver — not in a separate config file, not in the playbook, and not in a shared doc that someone has to remember to update.

Flow Weaver — Pool Alpha with 10 members across Arista, Juniper, and Cisco

The pool configuration defines the device scope. Pool Alpha contains the ten devices across Arista, Juniper, and Cisco that the audit targets. The pool is defined once and reused across workflows. Adding or removing a device from the pool updates every workflow that references it.

Flow Weaver — scheduling: WF1 daily 08:00, WF2 every 4 hours

The scheduling screenshot shows both jobs active. Workflow 1 runs daily at 08:00. Workflow 2 runs every 4 hours. Each fires independently — running the audit doesn't trigger remediation, and running remediation doesn't require a fresh audit first.

Flow Weaver — execution history showing completed runs for both workflows

What Runs on the Device

Ansible Playbook

- Platform detection — reads ansible_network_os and aborts immediately if the platform isn't one of eos, ios, or junos. No silent failures on unknown hardware.

- Pre-upgrade version check — runs show version (or the Junos equivalent), parses the output with platform-specific regex, and skips the device if it's already on the target version. The desired_os_version value comes from the inventory Workflow 2 builds at runtime from the ServiceNow task data — which in turn came from NetBox.

- Image transfer — copies the OS image from the staged file server to device flash. Junos uses junos_package with validation enabled before any write happens.

- Boot configuration and reload — sets the boot statement and issues the reload command per platform. Connection drops on reload are expected and handled with ignore_errors: true. After reload, the playbook waits for SSH to come back on port 22 before continuing.

- Post-upgrade verification — runs show version again and compares the result against desired_os_version. If they don't match, the task fails explicitly with the version delta in the error message. No ambiguous pass/fail states.
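
The decision points in the playbook (platform guard, skip if already compliant, fail loudly on a post-upgrade mismatch) can be expressed in a few lines of Python. This is a sketch of the logic only, not the playbook itself, which is written in Ansible; pre_check and post_check are hypothetical names.

```python
SUPPORTED_PLATFORMS = {"eos", "ios", "junos"}


def pre_check(network_os: str, running: str, desired: str) -> str:
    """Abort on unknown platforms; otherwise decide whether to upgrade."""
    if network_os not in SUPPORTED_PLATFORMS:
        raise ValueError(f"unsupported platform: {network_os}")
    return "skip" if running == desired else "upgrade"


def post_check(running: str, desired: str) -> None:
    """Raise with the version delta if the device is not on the target."""
    if running != desired:
        raise RuntimeError(
            f"upgrade verification failed: running {running}, expected {desired}"
        )
```

Raising on both the unknown platform and the failed verification mirrors the explicit failure behavior described in the steps above.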

Flow Weaver — manual test run against lab device node-l1 prior to production scheduling (trigger: Regular Run · runtime: 2026-03-15 15:52 · upgrade_status: complete · os_version: 17.14.1)

What Came Back From Workflow 1

Ten devices across three platforms — Arista EOS, Juniper Junos, and Cisco IOS XE. Four of them were running something other than what NetBox said they should be. Each of the four got a ServiceNow ticket. The other six were logged as clean. The CSV below is the exact output from that run.

Devices 0–3 result array

Devices 4–7 result array

Devices 8–9 result array + run summary

Ticket Lifecycle — Created, Approved, Closed

Workflow 1 created one Catalog Task per non-compliant device. Before Workflow 2 touches any of them, each task has to go through an approval step. The activity log in every ticket shows the same sequence: Workflow 1 creates the task with Approval: Requested and State: Open. A reviewer changes the approval to Approved. On the next 4-hour cycle after that approval, Workflow 2 picks it up, runs the playbook, and sets the task to Closed Complete.

The screenshots below show each of the four tasks in both states — open as Workflow 1 left them, and closed after Workflow 2 finished. These are the actual Catalog Tasks from the test run, with the hostnames, timestamps, and state transitions that ServiceNow captured.

The four devices that triggered tasks were ATL-LAB-DIST-CISCO-01 (IOS XE, one minor behind), SIN-HUB-SPINE-AR-01 (Arista EOS, one dot release behind), BER-CAMP-SPINE-JUN-01 (Junos, R1 instead of R2), and SEA-OFF-EDGE-CISCO-01 (IOS XE, two minor versions behind — the largest delta in the run).

ATL-LAB-DIST-CISCO-01: Open. Approval: Requested

ATL-LAB-DIST-CISCO-01: Closed Complete. Approval: Approved

SIN-HUB-SPINE-AR-01: Open. Approval: Requested

SIN-HUB-SPINE-AR-01: Closed Complete. Approval: Approved

BER-CAMP-SPINE-JUN-01: Open. Approval: Requested

BER-CAMP-SPINE-JUN-01: Closed Complete. Approval: Approved

Automated Remediation

Workflow 2 in Action — Per Device

Workflow 2 runs every 4 hours. Each cycle queries ServiceNow and processes whichever Catalog Tasks are Approved and Open at that moment. In this run, the four tasks were approved individually over the course of March 16–17, so Workflow 2 processed one device per cycle across four consecutive runs. The execution history confirms it: four separate completions, each showing 1/1 with 0 failures.

No inventory file was modified between cycles. No hostnames were hardcoded anywhere in the workflow definition. Each run extracted the target device directly from the approved task in ServiceNow and executed the playbook using the credentials already stored in the vault for that host.

Once the playbook confirmed a successful upgrade, the Catalog Task was set to Closed Complete automatically. The next WF1 run on March 17 at 08:00 — which took seven minutes instead of forty-one — confirmed the result: all ten devices came back In Sync.

The screenshots below show the Flow Weaver output per device after each playbook run: device name, post-upgrade OS version, and upgrade status.

Keeping the device list dynamic matters more than it sounds. A static hosts file tied to a workflow tends to drift — devices get renamed, decommissioned, or reassigned, and nobody updates the file. Pulling targets from approved ServiceNow tasks means the playbook runs against what ITSM says is outstanding and authorized, not against a list that was accurate three months ago.

The 4-hour cadence means that once a task is approved, the upgrade happens within the same business day — without anyone scheduling it manually.
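
To make the cadence concrete, the following sketch computes the first cycle that would pick up a task after its approval, assuming runs fire exactly on the 4-hour boundaries listed earlier and that approval times are in the server timezone. The next_cycle helper is hypothetical.

```python
from datetime import datetime, timedelta


def next_cycle(approved_at: datetime, interval_hours: int = 4) -> datetime:
    """First scheduled run strictly after approved_at (runs at 00:00, 04:00, ...)."""
    midnight = approved_at.replace(hour=0, minute=0, second=0, microsecond=0)
    # Number of whole intervals already elapsed since midnight.
    slots_elapsed = (approved_at - midnight) // timedelta(hours=interval_hours)
    return midnight + timedelta(hours=interval_hours * (slots_elapsed + 1))
```

Under these assumptions, a task approved at 09:30 would be processed by the 12:00 run, and the wait is never longer than four hours.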

ATL-LAB-DIST-CISCO-01 — upgrade_status: complete · os_version: 17.14.1

SIN-HUB-SPINE-AR-01 — upgrade_status: complete · os_version: 4.35.2F

BER-CAMP-SPINE-JUN-01 — upgrade_status: complete · os_version: 24.4R2

SEA-OFF-EDGE-CISCO-01 — upgrade_status: complete · os_version: 17.14.1

Reports That Actually Go Out

info@networkingdev.com — OS Compliance Report (WF-1984)

info@networkingdev.com — Ansible Remediation Summary (WF-1991)

Both workflows send an email at the end of each run. Workflow 1 sends the compliance CSV (OS_incident_report.csv) as an attachment — every device, its version status, and whether a Catalog Task was opened.
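
A report of that shape could be rendered with Python's standard csv module, as in the sketch below. The column names are assumptions chosen to match the description (device, versions, status, task opened); the real layout of OS_incident_report.csv is not shown here, and render_report is a hypothetical helper.

```python
import csv
import io

# Assumed column names; the actual report layout may differ.
FIELDS = ["hostname", "running_version", "desired_version", "status", "task_opened"]


def render_report(rows):
    """Render audit rows (a list of dicts keyed by FIELDS) as CSV text."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=FIELDS, lineterminator="\n")
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()
```

Writing every device, including the compliant ones, keeps the report useful as a full audit record even when nothing needs fixing.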

Workflow 2 sends an email with OS_incident_complete_report.csv attached — the list of devices whose OS was updated in that cycle, generated by the playbook report block. The screenshots below are from the actual runs.

Conclusion

Everything in One Workflow

The first version ran the full cycle (audit, ticket creation, and remediation) in a single workflow. It worked, but it created a scheduling problem: running a compliance check also meant running the playbook, every time. There was no way to audit without also remediating, or to re-trigger remediation without going through the full audit again. For teams that want to review and approve tasks before anything touches the devices, that's a blocker. Splitting the cycle into two independently scheduled workflows, with the ServiceNow approval between them, removed that coupling.

A Report That Means Something

Most compliance reports are snapshots — accurate when they're generated, obsolete a week later. What makes this pipeline different is that the report from Workflow 1 doesn't just describe what was found; it creates work items in ServiceNow that track each discrepancy until it's resolved. Workflow 2 closes those items once the playbook confirms the fix. The paper trail goes from detection to approval to verification without anyone manually updating a status.

That matters when you're trying to demonstrate compliance to a change advisory board, an auditor, or your own management. The data is there — timestamped, complete, and pulled directly from the systems that did the work.

Copyright © 2026 NetworkingDev - All Rights Reserved.